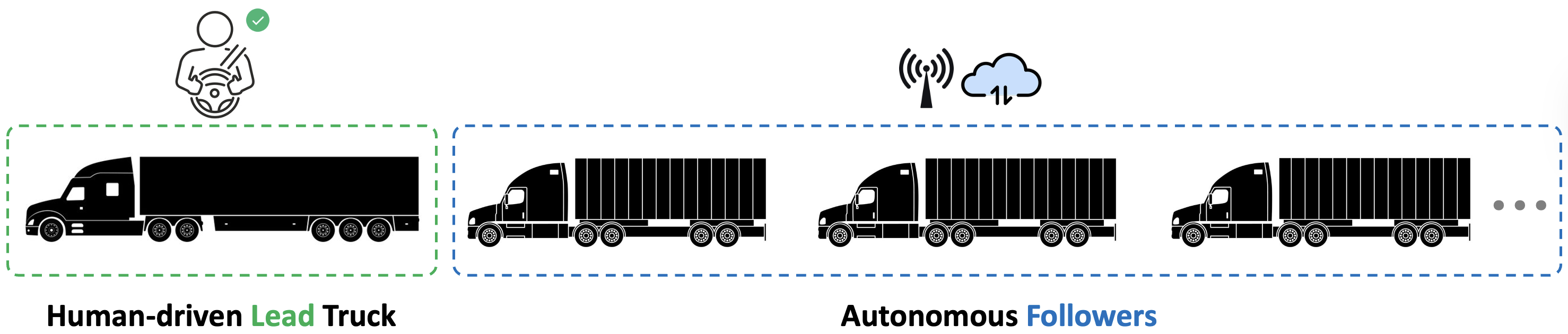

1. Human-Led Platooning with Autonomous Followers

1.1 Concept Design: self-propelled heavy-duty trailer with hybrid diesel-electric powertrain and independent wheel corner modules

The first component of this project is a design for a self-propelled heavy-duty trailer intended to platoon behind a lead truck. The design goes beyond conventional passive trailers by giving the trailer its own intelligence and propulsion. The trailer carries a hybrid diesel-electric powertrain designed for high efficiency and low emissions: the hybrid arrangement provides reliable power for long-haul transportation, while the electric motors deliver high torque on demand for uneven roads and steep grades. For terrain adaptability and maneuverability, the trailer employs independent wheel corner modules at each axle. Each module integrates an electric traction motor, a steering actuator, a suspension, and a braking system. Individual by-wire control replaces the conventional driveshaft and steering rack, avoiding central mechanical linkages. An affordable sensor suite is designed to achieve reliable and safe autonomous following using low-cost sensors and lightweight onboard processing. Each suite is a rugged module equipped with LiDAR, radar, and stereoscopic cameras, along with a local processing unit. Placing these suites at strategic locations (e.g., the front hitch area, the rear of the trailer, and the sides) gives the trailer 360° awareness of its surroundings. As a first step, a scaled pure-electric prototype is under development to demonstrate the feasibility of the proposed concept under controlled conditions. The prototype integrates a lightweight chassis, compact electric motors, drive-by-wire systems, and two sensor suites. On-campus trials will focus on low-speed maneuvering and system testing to verify the integrated hardware-software framework and ensure safe operation.
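To illustrate how by-wire corner modules can be coordinated without a mechanical steering linkage, the following is a minimal kinematic sketch, not the project's actual controller. It assumes a rigid trailer body, a hypothetical two-axle wheel layout, and a commanded body velocity and yaw rate; the function name and all dimensions are illustrative only.

```python
import math

def corner_module_commands(vx, vy, yaw_rate, wheel_positions):
    """Return (steer_angle_rad, wheel_speed_mps) for each corner module.

    vx, vy: desired body-frame velocity of the trailer reference point (m/s)
    yaw_rate: desired yaw rate (rad/s), positive counter-clockwise
    wheel_positions: list of (x, y) wheel locations in the body frame (m)
    """
    commands = []
    for x, y in wheel_positions:
        # Rigid-body velocity at the wheel: v + omega x r
        wheel_vx = vx - yaw_rate * y
        wheel_vy = vy + yaw_rate * x
        speed = math.hypot(wheel_vx, wheel_vy)
        # Use a zero steering angle when the wheel is not moving
        angle = math.atan2(wheel_vy, wheel_vx) if speed > 1e-9 else 0.0
        commands.append((angle, speed))
    return commands

# Hypothetical layout: 4 m between axles, 2 m track width
WHEELS = [(2.0, 1.0), (2.0, -1.0), (-2.0, 1.0), (-2.0, -1.0)]
straight = corner_module_commands(5.0, 0.0, 0.0, WHEELS)
turning = corner_module_commands(5.0, 0.0, 0.2, WHEELS)
```

In the turning case the front and rear wheels steer in opposite directions and the inner wheels run slower than the outer ones, which is the behavior a central steering rack cannot provide. A real controller would additionally respect steering-angle limits and actuator dynamics.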

1.2 Perception Systems

A multi-sensor fusion perception system is developed to give follower vehicles a real-time understanding of their surroundings. Data from cameras, LiDARs, radars, IMUs, and wheel encoders are combined for robust perception, enabling lead-vehicle detection, lane detection, road condition recognition, and obstacle detection. In particular, autonomous truck perception systems in Canada should be designed to detect and respond to wildlife crossings, which are common on rural roads, especially at dusk and at night. A wildlife image dataset will be collected and annotated to train a lightweight YOLO-based wildlife detector that identifies animals on the road. Meanwhile, a classical computer vision pipeline is developed to identify lane markings by type (e.g., dashed/solid, white/yellow) and to estimate road curvature. However, such numerical and geometric outputs are well suited to machines but hard for humans to interpret quickly. A vision-language model can bridge that gap by converting low-level outputs (e.g., pixel masks, curvature parameters, lane type labels) into human-readable explanations, supporting transparency, debugging, and human trust. Instead of requiring manual visualization, this interface explains the perception results in plain language, which speeds up safety assessment. The outputs of all these perception components will be integrated to provide overall situational awareness for the autonomous platoon.
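As a rough illustration of the gap between low-level lane outputs and a readable explanation, the sketch below computes the radius of curvature from a second-order lane fit and wraps it in a sentence. It is a simple template-based stand-in, not a vision-language model. The lane model x = a*y^2 + b*y + c (x lateral and positive to the right, y forward, both in metres), the function names, and the 2000 m "straight" threshold are all assumptions made for this example.

```python
def lane_curvature_radius(a, b, y):
    """Radius of curvature (m) of the lane model x = a*y**2 + b*y + c at y."""
    if abs(a) < 1e-12:
        return float("inf")  # a straight line has no finite radius
    return (1.0 + (2.0 * a * y + b) ** 2) ** 1.5 / abs(2.0 * a)

def describe_lane(line_style, color, a, b, y=0.0, straight_radius_m=2000.0):
    """Turn lane-fit parameters and type labels into a one-sentence summary."""
    radius = lane_curvature_radius(a, b, y)
    if radius >= straight_radius_m:
        shape = "the road ahead is approximately straight"
    else:
        # With x positive to the right, a > 0 bends the lane to the right
        direction = "right" if a > 0 else "left"
        shape = f"the road curves {direction} with a radius of about {radius:.0f} m"
    return f"{color.capitalize()} {line_style} lane marking detected; {shape}."

summary = describe_lane("solid", "yellow", a=0.001, b=0.0)
```

A vision-language model would replace the fixed template with free-form text grounded in the image, but the inputs it needs to explain are the same quantities used here.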

1.3 Decision-Making Considering Human-Machine Interaction

In parallel, the decision-making layer is designed to ensure that the autonomous followers make safe and efficient choices based on perception inputs and the lead vehicle’s motions. This includes designing how the autonomous system alerts the human lead driver and defining how it interprets and acts on the driver’s commands or unexpected maneuvers. A game-theory-based decision-making framework is formalized to handle human-in-the-loop uncertainty and environmental disturbances. In this hierarchical framework, the human driver is modeled as the leader, while the autonomous trailers act as followers. The leader determines the optimal driving strategy based on road and environmental conditions, while the followers compute their optimal responses by minimizing tracking errors, maintaining safe following distances, and optimizing energy efficiency. The formulation leads to a bi-level optimization process, in which the leader’s strategy anticipates the followers’ best responses and the followers adaptively adjust their control inputs to maintain formation stability. This structure naturally captures the interactions in human-machine collaboration, where human driving behaviors introduce stochastic and time-varying uncertainties. To handle such uncertainties, robust and adaptive control terms will be incorporated into the lower-level (follower) optimization, while learning-based estimators will refine the models of leader intention and the environment over time. Overall, this work aims to provide a mathematically grounded mechanism for balancing autonomy with human inputs, enhancing safety, trust, and coordination.
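The leader-follower (Stackelberg) structure described above can be sketched with a toy one-step example. This is only an illustration under strong assumptions: a single follower, speed as the only state, quadratic costs with made-up weights, and no safety-distance or uncertainty terms. All function names and parameter values are hypothetical.

```python
def follower_cost(u, v_lead, v_follow, dt=1.0, w_track=1.0, w_energy=0.5):
    """Lower-level cost: speed-tracking error plus control effort."""
    return w_track * (v_follow + u * dt - v_lead) ** 2 + w_energy * u ** 2

def follower_best_response(v_lead, v_follow, dt=1.0, w_track=1.0, w_energy=0.5):
    """Closed-form minimizer of follower_cost with respect to u."""
    return w_track * dt * (v_lead - v_follow) / (w_track * dt ** 2 + w_energy)

def leader_cost(v_lead, v_follow, v_desired, dt=1.0, w_cohesion=9.0):
    """Upper-level cost: the leader's own speed preference plus the tracking
    error that remains after the follower's anticipated best response."""
    u = follower_best_response(v_lead, v_follow, dt)
    residual = v_follow + u * dt - v_lead
    return (v_lead - v_desired) ** 2 + w_cohesion * residual ** 2

def stackelberg_leader_speed(v_follow, v_desired, candidates):
    """Pick the leader speed that is best given the follower's response."""
    return min(candidates, key=lambda v: leader_cost(v, v_follow, v_desired))

candidates = [0.5 * i for i in range(61)]  # candidate speeds, 0 to 30 m/s
v_star = stackelberg_leader_speed(v_follow=10.0, v_desired=20.0, candidates=candidates)
u_star = follower_best_response(v_star, 10.0)
```

Because the leader accounts for how well the follower can keep up, the selected speed lies between the follower's current speed and the leader's own preferred speed rather than jumping straight to the latter.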

1.4 Data-Driven Learning-Enabled Leader-Follower Platooning Control

At the core of the proposed framework is a leader-follower control strategy that treats the fleet as a cohesive, cooperative system, ensuring stability, safety, and scalability in diverse operating conditions. Technically, the human driver in the lead truck defines the reference trajectory, while each autonomous trailer maintains precise alignment through a virtual tractor model that tracks a moving reference point projected behind the vehicle ahead. To ensure adaptability, an adaptive control approach is developed using real-time model estimation and gain scheduling to respond to variations in payload, terrain, and weather. For example, the follower may automatically increase its following distance on slippery surfaces or modulate acceleration under heavy loads. Building upon this foundation, a dual control architecture that balances short-term control objectives with long-term learning will be integrated with reinforcement learning (RL) to address human-machine interaction and the uncertainty of human behavior. Since the platoon comprises both human-driven and autonomous units, understanding and modeling human-machine trust becomes crucial. On the one hand, when human drivers experience automation that aligns with their preferred driving style, they report higher acceptance and comfort. This indicates that capturing and replicating human driving styles not only improves system realism but also enhances driver trust and technology acceptance. On the other hand, autonomous followers must continuously evaluate the safety of the human driver’s maneuvers and adapt accordingly, either by following the human behavior or by learning it over time. This bi-directional adaptation reflects a mutual trust framework between human operators and automated agents.
The RL-based policy serves as an intelligent supervisory layer on top of the base controller, enabling the system to refine its responses through experience and to handle uncertainties and nonlinearities that are difficult to encode in traditional rule-based control.
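The reference-point tracking and gain-scheduling ideas above can be sketched as follows. This is a minimal illustration, not the project's controller: the constant time-gap spacing rule, the friction-based scaling, the force-limited acceleration bound, and every numeric value are assumptions chosen for the example.

```python
import math

def reference_point(lead_x, lead_y, lead_heading, gap):
    """Point projected `gap` metres behind the vehicle ahead, along its heading."""
    return (lead_x - gap * math.cos(lead_heading),
            lead_y - gap * math.sin(lead_heading))

def scheduled_gap(speed, friction, standstill_gap=5.0,
                  base_time_gap=1.0, dry_friction=0.8):
    """Constant time-gap spacing whose time gap grows as friction drops."""
    mu = min(max(friction, 0.1), dry_friction)  # clamp the friction estimate
    time_gap = base_time_gap * dry_friction / mu
    return standstill_gap + time_gap * speed

def scheduled_accel_limit(payload_kg, empty_mass_kg=8000.0,
                          max_force_n=40000.0, comfort_limit=2.0):
    """Acceleration bound that shrinks with total mass (F = m * a)."""
    return min(comfort_limit, max_force_n / (empty_mass_kg + payload_kg))

# Same speed, dry road versus a slippery surface
gap_dry = scheduled_gap(speed=20.0, friction=0.8)
gap_icy = scheduled_gap(speed=20.0, friction=0.4)
target = reference_point(lead_x=100.0, lead_y=0.0, lead_heading=0.0, gap=gap_icy)
```

The follower's base controller would then track `target` while respecting the scheduled acceleration bound; an RL supervisory layer could adjust parameters such as `base_time_gap` from experience.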

More experimental studies can be found at: A Scaled Three-Vehicle Platooning Platform.

1.5 V2V Communication in Remote Areas with Limited Infrastructure

Existing truck platooning systems rely heavily on GPS and cellular networks and are designed almost entirely for predictable highway conditions. These dependencies make them unsuitable for remote regions, where GPS signals are limited, cellular service is minimal, and environmental conditions are far less predictable than on highways. A reliable V2V communication link enables the autonomous followers to mimic the real-time control sequence of the leading human driver, who does not necessarily rely on GPS or any other infrastructure. This research is supported by strong industry collaboration with Audesse Automotive Inc., which provides automotive-grade Vehicle Control Units (VCUs) and development tools.
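As a sketch of what relaying the leader's control sequence over V2V might involve, the example below packs a command into a fixed-size binary message and has the follower reject duplicate or stale data. The message layout, field names, and 0.2 s timeout are hypothetical and do not describe any particular VCU or radio interface. Staleness is judged against the follower's own clock, so no GPS time synchronization is assumed.

```python
import struct
from dataclasses import dataclass

# Hypothetical wire layout, little-endian: sequence number, leader timestamp (s),
# steering (rad), throttle (0..1), brake (0..1)
_FORMAT = "<Idfff"

@dataclass
class LeaderCommand:
    seq: int
    timestamp: float
    steering: float
    throttle: float
    brake: float

    def pack(self) -> bytes:
        return struct.pack(_FORMAT, self.seq, self.timestamp,
                           self.steering, self.throttle, self.brake)

    @classmethod
    def unpack(cls, payload: bytes) -> "LeaderCommand":
        return cls(*struct.unpack(_FORMAT, payload))

class CommandReceiver:
    """Keeps the newest leader command and flags a stale link."""

    def __init__(self, timeout_s: float = 0.2):
        self.timeout_s = timeout_s
        self.latest = None
        self.received_at = None

    def on_packet(self, payload: bytes, received_at: float) -> bool:
        cmd = LeaderCommand.unpack(payload)
        # Drop duplicates and out-of-order packets
        if self.latest is not None and cmd.seq <= self.latest.seq:
            return False
        self.latest, self.received_at = cmd, received_at
        return True

    def command_at(self, now: float):
        """Return the command to replay, or None if the link has gone stale."""
        if self.latest is None or now - self.received_at > self.timeout_s:
            return None  # caller should fall back to sensor-based following
        return self.latest
```

When `command_at` returns `None`, the follower would rely on its onboard perception alone, which is why the V2V link complements rather than replaces the sensor suite.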

2. TruckSafe: A Physics-Informed Co-Driver System

3. Air-Ground Collaboration in Forestry

The most significant benefit of air-ground collaborative sensing lies in its ability to substantially enhance tree inventory workflows. Airborne cameras and LiDAR rapidly cover large forested regions and capture canopy-scale structural attributes, while ground robots operating beneath the canopy provide high-precision stem-level parameters such as diameter at breast height (DBH), tree height, stem curve, and wood volume. When fused, these datasets form a complete representation of forest structure that neither platform can achieve alone. Importantly, the integrated point clouds and extracted attributes can be stored in LAS/LAZ formats, enabling the construction of a long-term digital forest database. Such a database supports a wide range of applications in both environmental preservation and timber harvesting, contributing to sustainable and data-driven forest management.
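One common way to obtain a stem-level parameter such as DBH from ground LiDAR is to fit a circle to the stem points in a thin slice at breast height. The sketch below uses an algebraic least-squares circle fit as an example technique; it is not necessarily the method used in this project. The 1.3 m breast height, the slice thickness, and the assumption that the points are already segmented to a single stem with a known ground elevation are all choices made for this illustration.

```python
import math

def fit_circle(points_xy):
    """Algebraic least-squares circle fit; returns (cx, cy, radius)."""
    n = len(points_xy)
    mx = sum(p[0] for p in points_xy) / n
    my = sum(p[1] for p in points_xy) / n
    suu = suv = svv = suuu = svvv = suvv = svuu = 0.0
    for x, y in points_xy:
        u, v = x - mx, y - my  # centre the data for numerical stability
        suu += u * u
        suv += u * v
        svv += v * v
        suuu += u ** 3
        svvv += v ** 3
        suvv += u * v * v
        svuu += v * u * u
    # 2x2 normal equations for the circle centre in centred coordinates
    b1 = 0.5 * (suuu + suvv)
    b2 = 0.5 * (svvv + svuu)
    det = suu * svv - suv * suv
    if abs(det) < 1e-12:
        raise ValueError("points are collinear or too few to fit a circle")
    uc = (b1 * svv - b2 * suv) / det
    vc = (suu * b2 - suv * b1) / det
    radius = math.sqrt(uc * uc + vc * vc + (suu + svv) / n)
    return mx + uc, my + vc, radius

def estimate_dbh(points_xyz, ground_z=0.0, breast_height=1.3, half_thickness=0.05):
    """DBH (m) from stem points in a thin horizontal slice at breast height."""
    lo = ground_z + breast_height - half_thickness
    hi = ground_z + breast_height + half_thickness
    slice_xy = [(x, y) for x, y, z in points_xyz if lo <= z <= hi]
    if len(slice_xy) < 3:
        raise ValueError("not enough stem points in the breast-height slice")
    _, _, radius = fit_circle(slice_xy)
    return 2.0 * radius
```

The fit works on a partial arc, which matters because a ground robot usually sees only one side of each stem. Real scans would also need outlier rejection for branches and undergrowth before fitting.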

4. Human-Led Cooperative Transportation Systems: From Truck Platoons to Coastal Vessel Fleets